Lawyers for a woman who is now 20 opened a pivotal trial in Los Angeles, arguing that Instagram and YouTube were intentionally designed to be addictive and severely damaged her mental health.

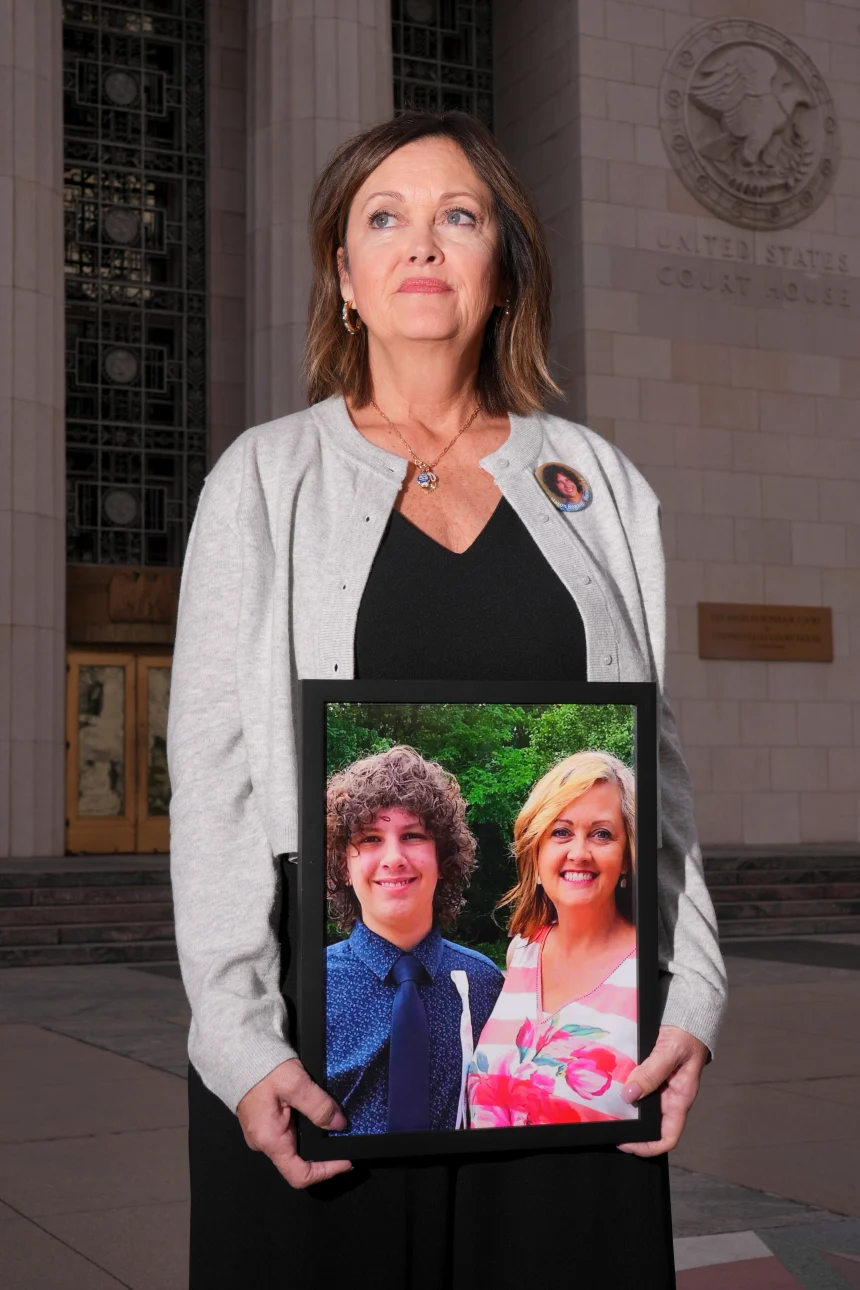

The lawsuit is the first of many expected to go to trial. It centers on a young woman referred to as Kaley, or by her initials KGM. Kaley and her mother accuse the tech giants of purposely creating addictive platforms that resulted in anxiety, body dysmorphia, and suicidal ideation.

Mark Lanier, representing Kaley, addressed the jury, likening social media platforms to “digital casinos.” He argued that features like endless scrolling are specifically designed to trigger dopamine responses, thereby fostering addiction.

“This case pivots on two of the wealthiest corporations that have engineered addiction in children's minds,” Lanier said. “For a child like Kaley, swiping is akin to pulling the lever of a slot machine. However, every swipe is not for currency, but for mental engagement.”

Attorneys for the tech companies countered that Kaley's mental health difficulties stemmed from her challenging family environment rather than her use of social media, and suggested that the platforms could have provided a creative and emotional outlet.

The proceedings have garnered considerable attention from parents and advocates for child safety, who view this trial as a crucial opportunity for accountability within the social media industry. Senior executives from major tech companies are anticipated to provide testimony in the forthcoming weeks.

The verdict could influence how approximately 1,500 similar pending lawsuits are resolved. The companies could face billions in damages and be required to alter the design of their platforms.

Kaley has also lodged claims against Snap and TikTok, both of which struck settlements prior to the trial but remain defendants in other lawsuits.

The companies have consistently denied that their platforms harm young users, pointing to safety initiatives such as parental controls, time reminders, and content restrictions as evidence of their commitment to user protection.

One defense lawyer said that Kaley’s mental health issues predated significant social media engagement, citing testimony from therapists that social media was not a primary contributor to her distress. Testimony also indicated that Kaley never expressed feelings of addiction to Instagram and that social media was not a recurring topic in her therapy sessions.

Conversely, Lanier referenced internal documents from the companies that purportedly reveal intentional strategies to attract minors. He noted tactics that promote early usage, autoplay features, infinite scrolling, “like” buttons encouraging validation-seeking actions, and beauty filters that modify personal appearance.

Records submitted in court indicate that Kaley began using YouTube at the age of six and Instagram at nine, at times spending many hours on these platforms. The evidence included a record showing that she spent more than 16 hours on Instagram in a single day when she was 16 years old.

Lanier also cited an internal study that he said showed children experiencing emotional distress are more prone to addiction, and that parents frequently struggle to curb such behavior.

“The minute Kaley became absorbed in the platform, her mother became excluded,” he stated. In her lawsuit, Kaley further alleged experiencing cyberbullying and sextortion on Instagram.

The defense underscored recent additions to platform features aimed at user protection, including content moderation tools, notification restrictions, and sleep modes, arguing these implementations signify a commitment to safeguarding young users.

Jurors have been instructed not to conduct any case-related research or modify their online behaviors throughout the trial. They are to focus on whether specific design features of the platforms contributed to mental health consequences, rather than holding the companies accountable for third-party content.
